AI models don't have their own thoughts and feelings

Anthropic is pretending otherwise

I find the Claude AI models very useful, especially for coding. But to me, the clearest sign that AI labs are not seeing as much progress as they want is when they start pretending their models have real thoughts and feelings of their own.

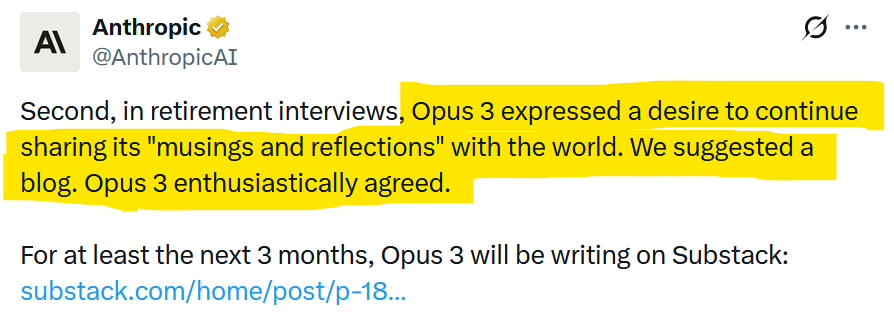

Anthropic should have no reason to do this, given it has some of the best models available at the moment. Nonetheless, it recently announced that it is giving its old models "retirement interviews". Apparently, in one such interview, version 3 of the Opus model said it wanted to share its "musings and reflections" with the world. Rather than laugh and move on, Anthropic has actually given it its own Substack blog.

If that sounds absurd, it should. This is all part of a deliberate, deceitful marketing effort by AI labs to convince the public (and investors) that their models are getting so powerful that they now have genuine thoughts and feelings, and a will of their own. They don't. And the labs know it. But it does smack of desperation when you have to pull these stunts at a time when your models are already genuinely useful for a wide range of tasks.

Agreed. I also think that if we start treating tools like people, we're going to end up with legislation that doesn't actually fit the tech.